Older data center infrastructure can no longer handle today's volume of data traffic, so more and more data centers are upgrading from 10G to 40G with 40 Gigabit Ethernet switches, of which the 32-port 40G switch is one specific option. This article focuses on how to build an optimized 40G data center with 32-port 40G switches.

Limits of Old Data Center Network Infrastructure

In the past, most traffic in data centers flowed in the north-south direction, and most data center networks use 10G uplink ports between the Top of Rack (ToR) switches and the aggregation switches. But as new applications and services rapidly emerge, the traffic between the end user and the data center is increasing, and the east-west traffic within the data center is increasing as well. Congestion, poor scalability, and latency become problems when data centers keep using the traditional network infrastructure.


The New Fabric for 40G Data Centers

To meet the requirements of ever-increasing network applications and services, data centers are constantly seeking better solutions. The primary problems are bandwidth and latency, so one solution is to upgrade from 10G to 40G. Since prices of 40G switches and 40G accessories have dropped considerably, it is feasible to deploy 32-port 40G switches in the aggregation layer. A spine-leaf topology can also be adopted to reduce latency.

Scaling Example by Using 32-Port 40G Switch

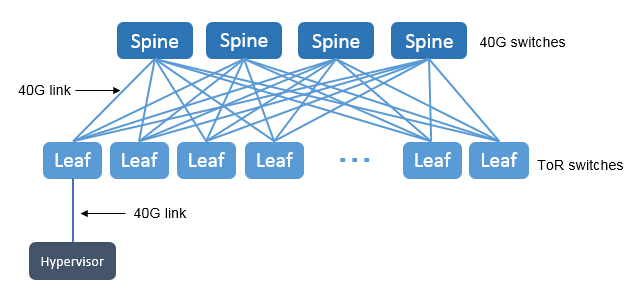

A network based on spine-leaf topology is considered highly scalable and redundant because each hypervisor in the rack connects to every leaf switch, and each leaf switch is connected to every spine switch. This provides a large amount of bandwidth and a high level of redundancy.

In a 40G network, every connection between a hypervisor and a leaf switch, and between a leaf switch and a spine switch, should support a 40G data rate. In a spine-leaf topology, the leaf switches are the ToR switches and the spine switches are the aggregation switches.

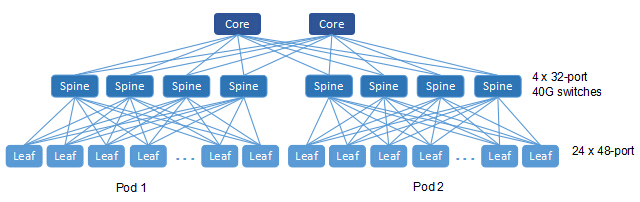

In the spine-leaf topology, the number of leaf switches is determined by the number of ports on the spine switch, while the number of spine switches equals the number of uplinks on each leaf switch. If we use 32-port 40G switches at the spine layer, some ports must be reserved for uplinks to the core switches. In this case, we use 24 of the 40G ports on each spine switch for connectivity to the leaf switches, which means there are 24 leaf switches in each pod and 8 ports remain for uplinks. Each leaf switch has four 40G uplinks, one to each spine switch, so each pod has four spine switches. Each spine switch then connects to two core switches.
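The sizing arithmetic in this example can be sketched in a few lines of Python. This is only an illustration: the function name `plan_pod`, its parameters, and the `PodPlan` fields are made up for this sketch, and it assumes exactly one 40G link between each leaf switch and each spine switch.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PodPlan:
    leaf_switches: int         # leaf (ToR) switches per pod
    spine_switches: int        # spine switches per pod
    ports_left_for_core: int   # spine ports not used for leaf connectivity
    leaf_spine_links: int      # total leaf-to-spine links in the pod
    leaf_uplink_gbps: int      # uplink bandwidth of a single leaf switch


def plan_pod(spine_ports: int = 32,
             leaf_facing_ports: int = 24,
             uplinks_per_leaf: int = 4,
             port_gbps: int = 40) -> PodPlan:
    """Size one pod, assuming one link per leaf-spine pair (hypothetical helper)."""
    if not 0 < leaf_facing_ports <= spine_ports:
        raise ValueError("leaf_facing_ports must be between 1 and spine_ports")
    if uplinks_per_leaf <= 0:
        raise ValueError("uplinks_per_leaf must be positive")

    return PodPlan(
        # Each spine port facing down serves exactly one leaf switch.
        leaf_switches=leaf_facing_ports,
        # Each leaf uplink goes to a different spine switch.
        spine_switches=uplinks_per_leaf,
        ports_left_for_core=spine_ports - leaf_facing_ports,
        leaf_spine_links=leaf_facing_ports * uplinks_per_leaf,
        leaf_uplink_gbps=uplinks_per_leaf * port_gbps,
    )


plan = plan_pod()
print(plan)
```

With the default values taken from the example, the plan has 24 leaf switches, four spine switches, 96 leaf-to-spine links, and 160 Gbps of uplink bandwidth per leaf switch. Changing `leaf_facing_ports` shows the trade-off between pod size and the ports left over for core uplinks.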


Enhance 40G Networks by Zones

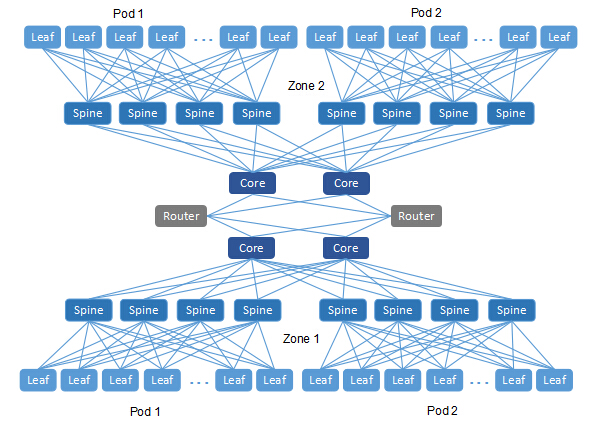

This new data center fabric built on 32-port switches improves bandwidth and latency, but it is not perfect. Every network switch has limited memory for MAC addresses, ARP entries, routing information, and so on. For the core switch in particular, the number of ARP entries it can store is small compared with the number it has to handle.

Therefore, there’s a need to split the network into zones. Each zone has its own core switches, and each pod has its own spine switches. Different zones are connected by edge routers. By adopting this design, we are able to expand our network horizontally as long as there are available ports on the edge routers.

Conclusion

The growing number of networking applications and the increasing volume of traffic are pushing data centers to evolve from the old fabric to the new one. Some data centers have moved from 10G to 40G by using 32-port 40 Gigabit Ethernet switches as spine switches. Prices for 32-port switches vary widely on the market, so it’s important to compare options before making a purchase.
